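The following are notes from an experiment in a test environment. The post is based on 李振良OK's article "使用 kubeadm 快速部署一个 Kubernetes 集群" (Deploying a Kubernetes cluster quickly with kubeadm).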

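kubeadm is a tool released by the official Kubernetes community for quickly deploying Kubernetes clusters.

With this tool, a Kubernetes cluster can be deployed with two commands:

```shell
# Create a Master node
$ kubeadm init

# Join a Node to the current cluster
$ kubeadm join <Master node IP and port>
```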

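1. Installation requirements

Before you start, the machines for the Kubernetes cluster must meet the following requirements:

- One or more machines running CentOS 7.x x86_64
- Hardware: 2 GB RAM or more, 2 CPUs or more, 30 GB disk or more
- Full network connectivity between all machines in the cluster
- Internet access, which is needed to pull images
- Swap disabled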

2. Preparing the environment [all nodes]

| Role | IP |
|------|----|
| k8s-master1 | 192.168.100.45 |
| k8s-node1 | 192.168.100.46 |
| k8s-node2 | 192.168.100.47 |

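```shell
# Disable the firewall
$ systemctl stop firewalld
$ systemctl disable firewalld

# Disable SELinux
$ sed -i 's/enforcing/disabled/' /etc/selinux/config  # permanent
$ setenforce 0                                         # current boot only

# Disable swap
$ swapoff -a       # current boot only
$ vim /etc/fstab   # permanent: comment out the swap line

# Set the hostname of each machine (see the table above)
$ hostnamectl set-hostname <hostname>

# Add the hosts entries
$ cat >> /etc/hosts << EOF
192.168.100.45 k8s-master1
192.168.100.46 k8s-node1
192.168.100.47 k8s-node2
EOF

# Pass bridged IPv4/IPv6 traffic to the iptables chains
$ modprobe br_netfilter   # the net.bridge.* keys only exist once this module is loaded
$ cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
$ sysctl --system   # apply

# Synchronize the time
$ yum install ntpdate -y
$ ntpdate time.windows.com
$ /usr/sbin/ntpdate cn.pool.ntp.org   # alternative NTP pool
```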
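The `vim /etc/fstab` step above is interactive. The following sketch comments out every active swap entry without opening an editor. It assumes the standard fstab layout, in which the third field is the filesystem type `swap`. The helper name `comment_out_swap` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out active swap entries in an fstab-style file.
# Assumes the third whitespace-separated field is the filesystem type ("swap").
comment_out_swap() {
  local fstab="${1:-/etc/fstab}"
  # Keep a backup next to the original before rewriting it.
  cp "$fstab" "$fstab.bak"
  # Lines that are not already comments and whose 3rd field is "swap" get a '#' prefix.
  awk '$1 !~ /^#/ && $3 == "swap" { print "#" $0; next } { print }' \
    "$fstab.bak" > "$fstab"
}

# Usage (as root): swapoff -a && comment_out_swap /etc/fstab
```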

3. Installing Docker/kubeadm/kubelet

For the Kubernetes version used here (v1.19), the default CRI (container runtime) is Docker, so install Docker first.

3.1 Install Docker [all nodes]

Install Docker by following the reference documentation. In this test, Docker was installed with Ansible:

```shell
ansible-playbook /etc/ansible/roles/dp_docker/docker.yml -vv -e "HOST=192.168.100.45"
ansible-playbook /etc/ansible/roles/dp_docker/docker.yml -vv -e "HOST=192.168.100.46"
ansible-playbook /etc/ansible/roles/dp_docker/docker.yml -vv -e "HOST=192.168.100.47"
```

3.2 Add the Alibaba Cloud YUM repository [all nodes]

```shell
$ cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
```

3.3 Install kubeadm, kubelet, and kubectl

New versions are released frequently, so the version number is pinned here:

```shell
$ yum install -y kubelet-1.19.0 kubeadm-1.19.0 kubectl-1.19.0
$ systemctl enable kubelet
```

- kubelet: the node agent, managed as a systemd daemon
- kubeadm: the deployment tool
- kubectl: the Kubernetes command-line management tool

4. Deploying the Kubernetes Master

Note: run these steps on 192.168.100.45 (Master).

4.1 Initialize the master

```shell
$ kubeadm init \
  --apiserver-advertise-address=192.168.100.45 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.19.0 \
  --service-cidr=10.97.0.0/12 \
  --pod-network-cidr=10.245.0.0/16 \
  --ignore-preflight-errors=all
```

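- `--apiserver-advertise-address`: the address the cluster advertises (the API server's advertise address)
- `--image-repository`: the default registry, k8s.gcr.io, is not reachable from mainland China, so the Alibaba Cloud mirror is specified here
- `--kubernetes-version`: the Kubernetes version, which must match the packages installed above
- `--service-cidr`: the cluster-internal virtual network for Services, which give Pods a unified access entry point
- `--pod-network-cidr`: the Pod network, which must match the value in the CNI network component's YAML deployed below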
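The Pod and Service ranges must not overlap. A value such as 10.97.0.0/12 is not the canonical network address for a /12 prefix; it is interpreted as 10.96.0.0/12. The following pure-bash sketch checks both points before you run `kubeadm init`. It handles IPv4 only, and the function names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for IPv4 CIDR sanity checks (pure bash, IPv4 only).
# Assumes well-formed dotted quads without leading zeros (bash reads "08" as octal).

# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  local a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Convert a 32-bit integer back to dotted-quad notation.
int_to_ip() {
  local n=$1
  echo "$(( (n >> 24) & 255 )).$(( (n >> 16) & 255 )).$(( (n >> 8) & 255 )).$(( n & 255 ))"
}

# Netmask for a prefix length, as an integer (e.g. 12 -> 0xFFF00000).
prefix_mask() {
  local p=$1
  echo $(( p == 0 ? 0 : ((0xFFFFFFFF << (32 - p)) & 0xFFFFFFFF) ))
}

# Print the canonical network/prefix, e.g. 10.97.0.0/12 -> 10.96.0.0/12.
cidr_network() {
  local ip=${1%/*} prefix=${1#*/}
  echo "$(int_to_ip $(( $(ip_to_int "$ip") & $(prefix_mask "$prefix") )))/$prefix"
}

# Succeed (exit status 0) if the two CIDRs overlap, fail otherwise.
cidrs_overlap() {
  local p1=${1#*/} p2=${2#*/}
  local shorter=$(( p1 < p2 ? p1 : p2 ))
  local mask n1 n2
  mask=$(prefix_mask "$shorter")
  n1=$(ip_to_int "${1%/*}")
  n2=$(ip_to_int "${2%/*}")
  # Two ranges overlap exactly when they agree on the bits of the shorter prefix.
  (( (n1 & mask) == (n2 & mask) ))
}
```

For the values used in this post, `cidr_network 10.97.0.0/12` prints `10.96.0.0/12`. `cidrs_overlap 10.97.0.0/12 10.245.0.0/16` fails, meaning the ranges do not overlap, so the Service and Pod ranges can be used together.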

Alternatively, bootstrap the cluster from a configuration file. This is the method used in this test:

```shell
$ vim kubeadm.conf
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.19.0
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: 10.245.0.0/16
  serviceSubnet: 10.97.0.0/12

$ kubeadm init --config kubeadm.conf --ignore-preflight-errors=all

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.100.45:6443 --token 7px8cc.9k65jhj56g9ufi6a \
    --discovery-token-ca-cert-hash sha256:708a063a87d1cd85a4b8dc585c33eb2c4cac02dfa37197f03e6588824ac49ab2
```
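The configuration file above does not set an advertise address, so kubeadm falls back to the address of the host's default-route interface. To pin the address explicitly (the equivalent of `--apiserver-advertise-address`), add an `InitConfiguration` document to the same file; the v1beta2 API accepts it. The sketch below uses the values from this post. The helper name is hypothetical, and the output path is a parameter so you can try the helper without touching a real node.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a kubeadm v1beta2 config that pins the advertise
# address (InitConfiguration) alongside the cluster settings used in this post.
write_kubeadm_conf() {
  local out=${1:-kubeadm.conf}
  cat > "$out" << 'EOF'
apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.100.45   # same as --apiserver-advertise-address
  bindPort: 6443
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.19.0
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: 10.245.0.0/16
  serviceSubnet: 10.97.0.0/12
EOF
}

# Usage: write_kubeadm_conf kubeadm.conf && kubeadm init --config kubeadm.conf
```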

Note: Nodes use the `--token 7px8cc.9k65jhj56g9ufi6a` value from the output above to join the cluster.

4.2 Copy the credentials file that kubectl uses to connect to the cluster to the default path:

```shell
$ mkdir -p $HOME/.kube
$ sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
$ sudo chown $(id -u):$(id -g) $HOME/.kube/config
$ kubectl get nodes
NAME          STATUS     ROLES    AGE     VERSION
k8s-master1   NotReady   master   3m19s   v1.19.0
```

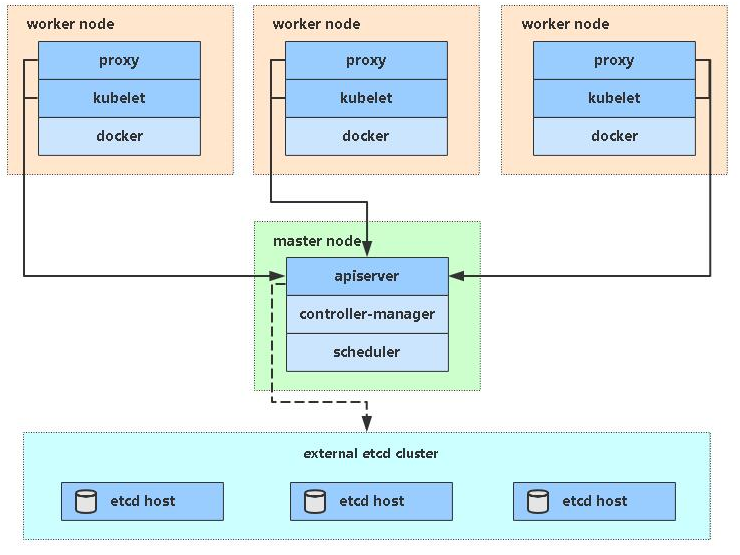

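4.3 What `kubeadm init` does:

- `[preflight]`: runs environment checks and pulls the images (`kubeadm config images pull`)
- `[certs]`: generates the Kubernetes and etcd certificates in /etc/kubernetes/pki
- `[kubeconfig]`: generates the kubeconfig files
- `[kubelet-start]`: generates the kubelet configuration file
- `[control-plane]`: deploys the control-plane components as containers started from the images (check them with `kubectl get pods -n kube-system`)
- `[etcd]`: deploys the etcd database as a container started from its image
- `[upload-config]` `[kubelet]` `[upload-certs]`: uploads the configuration into the cluster
- `[mark-control-plane]`: adds the label `node-role.kubernetes.io/master=''` to the control-plane node, plus the taint `node-role.kubernetes.io/master:NoSchedule`
- `[bootstrap-token]`: sets up bootstrap tokens so that kubelets are issued certificates automatically
- `[addons]`: deploys the CoreDNS and kube-proxy add-ons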

If you run into problems during deployment, run `kubeadm reset` to clear the current initialization and start over.

5. Joining Kubernetes Nodes


Note: run these steps on 192.168.100.46 and 192.168.100.47 (Nodes).

To add a new node to the cluster, run the `kubeadm join` command printed by `kubeadm init`:

```shell
$ kubeadm join 192.168.100.45:6443 --token 7px8cc.9k65jhj56g9ufi6a \
    --discovery-token-ca-cert-hash sha256:708a063a87d1cd85a4b8dc585c33eb2c4cac02dfa37197f03e6588824ac49ab2
[preflight] Running pre-flight checks
    [WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/
    [WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.21. Latest validated version: 19.03
[preflight] Reading configuration from the cluster...
[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Starting the kubelet
[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:
* Certificate signing request was sent to apiserver and a response was received.
* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.
```

```shell
$ kubectl get nodes
NAME          STATUS     ROLES    AGE   VERSION
k8s-master1   NotReady   master   12m   v1.19.0
k8s-node1     NotReady   <none>   60s   v1.19.0
k8s-node2     NotReady   <none>   45s   v1.19.0
```

The nodes stay NotReady until a CNI network plugin is deployed (see section 6).
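To address the `IsDockerSystemdCheck` warning in the join output above, switch Docker to the systemd cgroup driver on every node. The sketch below assumes that Docker reads `/etc/docker/daemon.json` and that the file does not already hold other settings, because the helper overwrites it. Apply it before `kubeadm init`/`kubeadm join`. On a node that has already joined, the kubelet's cgroup driver must match Docker's, so a `kubeadm reset` followed by a re-join is likely the simpler route. The helper name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: configure Docker to use the systemd cgroup driver.
# WARNING: overwrites the target file; merge by hand if daemon.json already exists.
set_docker_systemd_cgroup() {
  local conf=${1:-/etc/docker/daemon.json}
  mkdir -p "$(dirname "$conf")"
  cat > "$conf" << 'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Usage (as root, on every node, before kubeadm init/join):
#   set_docker_systemd_cgroup && systemctl restart docker
#   docker info --format '{{.CgroupDriver}}'   # should now report systemd
```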

By default, a token is valid for 24 hours. Once it expires, it can no longer be used, and you need to create a new one:

```shell
$ kubeadm token create
$ kubeadm token list
$ openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'
63bca849e0e01691ae14eab449570284f0c3ddeea590f8da988c07fe2729e924

$ kubeadm join 192.168.100.45:6443 --token nuja6n.o3jrhsffiqs9swnu --discovery-token-ca-cert-hash sha256:63bca849e0e01691ae14eab449570284f0c3ddeea590f8da988c07fe2729e924

# Or print a complete join command (new token plus CA hash) in one step
$ kubeadm token create --print-join-command
```
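Bootstrap tokens have a fixed shape: a 6-character token ID, a dot, and a 16-character secret, all lowercase letters and digits (`[a-z0-9]{6}.[a-z0-9]{16}`). A check like the one below catches a copy-paste error in the join command before it reaches the API server. The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the argument looks like a kubeadm bootstrap token
# (6 lowercase alphanumerics, a dot, then 16 lowercase alphanumerics).
is_bootstrap_token() {
  local re='^[a-z0-9]{6}\.[a-z0-9]{16}$'
  [[ $1 =~ $re ]]
}

# Usage:
#   is_bootstrap_token "nuja6n.o3jrhsffiqs9swnu" && echo "token format OK"
```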

6. Deploying the container network (CNI)


Note: deploy only one of the options below. Calico is recommended.

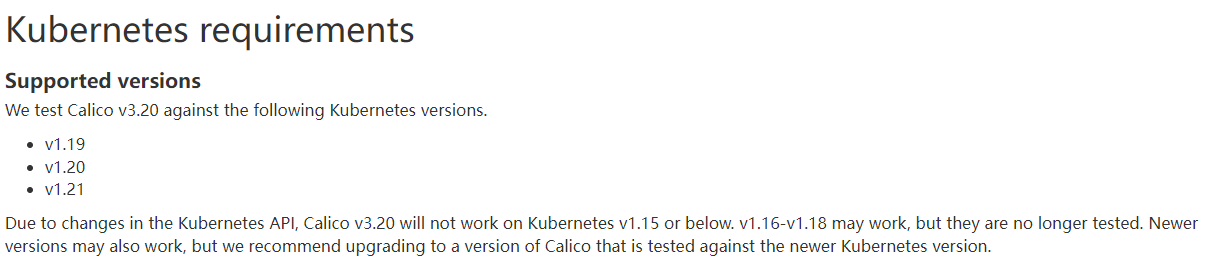

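Calico is a pure Layer 3 data-center networking solution that supports a wide range of platforms, including Kubernetes and OpenStack.

On every compute node, Calico uses the Linux kernel to implement an efficient virtual router (vRouter) that forwards traffic. Each vRouter uses the BGP protocol to propagate the routes of the workloads running on it throughout the Calico network.

In addition, Calico implements Kubernetes network policy, providing ACL functionality.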

Quickstart for Calico on Kubernetes

```shell
$ wget https://docs.projectcalico.org/v3.20/manifests/calico.yaml
```

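After downloading, change the Pod network defined in the manifest (`CALICO_IPV4POOL_CIDR`) so that it matches the value given to `kubeadm init` (10.245.0.0/16 here).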
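The sketch below makes this edit with GNU sed. It assumes that the manifest still contains the default commented-out lines `# - name: CALICO_IPV4POOL_CIDR` and `#   value: "192.168.0.0/16"` and that the value line directly follows the name line. If the lines have already been uncommented, the sed script leaves them unchanged. The helper name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper (GNU sed): uncomment CALICO_IPV4POOL_CIDR in calico.yaml
# and set it to the Pod CIDR passed to kubeadm init.
set_calico_pool_cidr() {
  local file=$1 cidr=$2
  sed -i \
    -e 's|^\([[:space:]]*\)# \(- name: CALICO_IPV4POOL_CIDR\)|\1\2|' \
    -e '/- name: CALICO_IPV4POOL_CIDR/{n;s|^\([[:space:]]*\)#   value: ".*"|\1  value: "'"$cidr"'"|;}' \
    "$file"
}

# Usage: set_calico_pool_cidr calico.yaml 10.245.0.0/16
#        grep -A1 CALICO_IPV4POOL_CIDR calico.yaml   # check the result
```

The first expression removes the `# ` marker from the name line. The second moves to the next line and rewrites the value while keeping the value aligned under `name:`.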

After editing, apply the manifest:

```shell
$ kubectl apply -f calico.yaml
$ kubectl get pods -n kube-system
```

7. Testing the Kubernetes cluster

7.1 `kubectl get cs` reports STATUS Unhealthy

Checking the status of the Master components reports Unhealthy:

```shell
$ kubectl get cs
Warning: v1 ComponentStatus is deprecated in v1.19+
NAME                 STATUS      MESSAGE                                                                                       ERROR
scheduler            Unhealthy   Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused
controller-manager   Unhealthy   Get "http://127.0.0.1:10252/healthz": dial tcp 127.0.0.1:10252: connect: connection refused
etcd-0               Healthy     {"health":"true"}
```

The kube-controller-manager and kube-scheduler manifests set `--port=0` by default. This disables their insecure HTTP ports (10252 and 10251), so the API server cannot reach them to obtain the Master component status. To fix this, comment out that flag in both files:

```shell
$ vim /etc/kubernetes/manifests/kube-controller-manager.yaml   # comment out "- --port=0"
$ vim /etc/kubernetes/manifests/kube-scheduler.yaml            # comment out "- --port=0"
$ systemctl restart kubelet
```
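The two `vim` edits can also be done non-interactively. The sketch below uses GNU sed and assumes that the flag appears on its own line as a `- --port=0` list item, as in the kubeadm-generated manifests. The kubelet watches /etc/kubernetes/manifests, so it recreates the edited static Pods automatically; the `systemctl restart kubelet` step above does no harm. The helper name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper (GNU sed): comment out the "- --port=0" flag in static Pod
# manifests. Lines that are already commented out are left unchanged.
comment_out_port_zero() {
  local f
  for f in "$@"; do
    # Prefix the exact "- --port=0" list item with '#', keeping its indentation.
    sed -i 's|^\([[:space:]]*- --port=0[[:space:]]*\)$|#\1|' "$f"
  done
}

# Usage (on the master, as root):
#   cd /etc/kubernetes/manifests
#   comment_out_port_zero kube-controller-manager.yaml kube-scheduler.yaml
```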

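7.2 Testing the Kubernetes cluster with an Nginx image

- Verify that Pods work
- Verify Pod network communication
- Verify DNS resolution

Create an Nginx Pod in the Kubernetes cluster and check that it runs correctly:

```shell
$ kubectl create deployment nginx --image=nginx
# kubectl has no "create pod" subcommand; a standalone Pod would be: kubectl run nginx --image=nginx
$ kubectl expose deployment nginx --port=80 --type=NodePort
$ kubectl get pod,svc
```

Then open http://NodeIP:Port, where Port is the NodePort shown for the nginx Service.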
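You can read the NodePort for the `http://NodeIP:Port` check from the PORT(S) column of `kubectl get svc`, for example `80:31882/TCP` in the output further down. The small sketch below parses that string. The helper name is hypothetical. Alternatively, `kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'` returns the same number directly from the API.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract the NodePort from a PORT(S) value such as "80:31882/TCP".
node_port_from_ports() {
  local ports=$1
  [[ $ports == *:* ]] || return 1   # ClusterIP-only values like "443/TCP" have no NodePort
  ports=${ports#*:}                 # drop "80:"  -> "31882/TCP"
  echo "${ports%%/*}"               # drop "/TCP" -> "31882"
}

# Usage: test the Service through k8s-node1
#   port=$(node_port_from_ports "80:31882/TCP")
#   curl -s "http://192.168.100.46:${port}" | grep -i 'welcome to nginx'
```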

This completes the deployment of the single-Master Kubernetes cluster.

Error:

```shell
kubectl get pods
NAME                     READY   STATUS              RESTARTS   AGE
nginx-6799fc88d8-tvrbw   0/1     ContainerCreating   0          8h

kubectl describe pod nginx-6799fc88d8-tvrbw
...
Failed to create pod sandbox: rpc error: code = Unknown desc = failed to set up sandbox container "717bf2b3d47ca49d78697be84bcfd46c06b8a1a31ba3b780852470c92fa495d4" network for pod "nginx-6799fc88d8-tvrbw": networkPlugin cni failed to set up pod "nginx-6799fc88d8-tvrbw_default" network: stat /var/lib/calico/nodename: no such file or directory: check that the calico/node container is running and has mounted /var/lib/calico/
```

Solution

Calico was not installed correctly.

A check of the Calico Pods showed that their status was abnormal:

```shell
kubectl get pods -n kube-system -o wide
NAME                                       READY   STATUS              RESTARTS   AGE   IP               NODE          NOMINATED NODE   READINESS GATES
calico-kube-controllers-577f77cb5c-m6z6z   0/1     ContainerCreating   0          9h    <none>           k8s-node2     <none>           <none>
calico-node-2xwnp                          0/1     ImagePullBackOff    0          9h    192.168.100.45   k8s-master1   <none>           <none>
calico-node-rft5r                          0/1     ImagePullBackOff    0          9h    192.168.100.46   k8s-node1     <none>           <none>
calico-node-vdc4p                          0/1     ImagePullBackOff    0          9h    192.168.100.47   k8s-node2     <none>           <none>
coredns-6d56c8448f-9hhjp                   0/1     ContainerCreating   0          9h    <none>           k8s-node2     <none>           <none>
coredns-6d56c8448f-x8tzg                   0/1     ContainerCreating   0          9h    <none>           k8s-node2     <none>           <none>
```

The Pod details confirm that the image pull failed:

```shell
kubectl describe pods calico-node-2xwnp -n kube-system
...
    Image:          docker.io/calico/node:v3.20.6
    Image ID:
    Port:           <none>
    Host Port:      <none>
    State:          Waiting
      Reason:       ImagePullBackOff
...
  Warning  Failed            9m42s (x85 over 9h)    kubelet, k8s-master1  Error: ErrImagePull
  Warning  DNSConfigForming  5m41s (x1833 over 9h)  kubelet, k8s-master1  Nameserver limits were exceeded, some nameservers have been omitted, the applied nameserver line is: 58.22.96.66 218.104.128.106 223.5.5.5
```

Install the pod network plugin (Calico):

```shell
kubectl apply -f https://docs.projectcalico.org/v3.20/manifests/calico.yaml
```

Pulling the Calico images often fails at this step, so pull them manually. Only the first image below corresponds to the Calico v3.20 manifest used here. The others belong to older releases (Kubernetes v1.13.2 components, Calico v3.3.2, and so on) and are not used by this v1.19 cluster.

```shell
docker pull quay.io/calico/node:v3.20.6
docker pull mirrorgooglecontainers/kube-apiserver:v1.13.2
docker pull mirrorgooglecontainers/kube-proxy:v1.13.2
docker pull mirrorgooglecontainers/kube-controller-manager:v1.13.2
docker pull mirrorgooglecontainers/kube-scheduler:v1.13.2
docker pull mirrorgooglecontainers/coredns:1.2.6
docker pull mirrorgooglecontainers/etcd:3.2.24
docker pull mirrorgooglecontainers/pause:3.1
docker pull mirrorgooglecontainers/kubernetes-dashboard-amd64:v1.10.0
docker pull shikanon096/traefik:1.7.5
docker pull shikanon096/gcr.io.kubernetes-helm.tiller:v2.12.0
docker pull mirrorgooglecontainers/addon-resizer:1.8.4
docker pull mirrorgooglecontainers/metrics-server-amd64:v0.3.1
docker pull quay.io/calico/cni:v3.3.2
docker pull quay.io/calico/node:v3.3.2
docker pull quay.io/calico/typha:v3.3.2
```
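Instead of maintaining an image list by hand, you can read the images a manifest actually needs from its `image:` fields and pre-pull them on every node. The sketch below assumes one `image: <ref>` entry per line with no trailing comment, as in the Calico manifests. The helper name is hypothetical. If docker.io itself is unreachable, the images still have to come from another registry (such as quay.io, as above), and the manifest must be edited to match.

```shell
#!/usr/bin/env bash
# Hypothetical helper: list the unique container images referenced by a manifest.
manifest_images() {
  # Match "image: <ref>" and "- image: <ref>" lines, print the ref,
  # strip optional quotes, then de-duplicate.
  awk '$1 == "image:" || $1 == "-" && $2 == "image:" { print $NF }' "$1" \
    | tr -d "\"'" | sort -u
}

# Usage (on every node): pre-pull whatever calico.yaml references.
#   for img in $(manifest_images calico.yaml); do docker pull "$img"; done
```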

Delete the old Pods by deleting and re-applying the manifest:

```shell
kubectl delete -f calico.yaml
vim calico.yaml
kubectl apply -f calico.yaml
kubectl get pods -n kube-system -o wide
NAME                                       READY   STATUS    RESTARTS   AGE     IP               NODE          NOMINATED NODE   READINESS GATES
calico-kube-controllers-749bdb99d7-j2bl7   1/1     Running   2          3m47s   10.245.169.129   k8s-node2     <none>           <none>
calico-node-88jsl                          1/1     Running   0          3m47s   192.168.100.46   k8s-node1     <none>           <none>
calico-node-nbnxw                          1/1     Running   0          3m47s   192.168.100.47   k8s-node2     <none>           <none>
calico-node-wztnw                          1/1     Running   0          3m47s   192.168.100.45   k8s-master1   <none>           <none>
coredns-6d56c8448f-772vz                   1/1     Running   0          9m34s   10.245.169.130   k8s-node2     <none>           <none>
coredns-6d56c8448f-g5c7k                   1/1     Running   0          9m50s   10.245.36.65     k8s-node1     <none>           <none>
etcd-k8s-master1                           1/1     Running   0          11h     192.168.100.45   k8s-master1   <none>           <none>
kube-apiserver-k8s-master1                 1/1     Running   0          11h     192.168.100.45   k8s-master1   <none>           <none>
kube-controller-manager-k8s-master1        1/1     Running   0          11h     192.168.100.45   k8s-master1   <none>           <none>
kube-proxy-7w9tk                           1/1     Running   0          11h     192.168.100.47   k8s-node2     <none>           <none>
kube-proxy-fx99k                           1/1     Running   0          11h     192.168.100.46   k8s-node1     <none>           <none>
kube-proxy-rrqlf                           1/1     Running   0          11h     192.168.100.45   k8s-master1   <none>           <none>
kube-scheduler-k8s-master1                 1/1     Running   0          11h     192.168.100.45   k8s-master1   <none>           <none>

kubectl get pods -o wide
NAME                     READY   STATUS    RESTARTS   AGE   IP             NODE        NOMINATED NODE   READINESS GATES
nginx-6799fc88d8-tvrbw   1/1     Running   0          11h   10.245.36.66   k8s-node1   <none>           <none>

kubectl get pod,svc
NAME                         READY   STATUS    RESTARTS   AGE
pod/nginx-6799fc88d8-tvrbw   1/1     Running   0          11h

NAME                 TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)        AGE
service/kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP        11h
service/nginx        NodePort    10.96.48.7   <none>        80:31882/TCP   11h
```

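8. Deploying the Dashboard

Project mirror: https://gitcode.net/mirrors/kubernetes/dashboard

Either apply the manifest directly:

```shell
$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml
```

or download it first so you can edit it (the steps below use v2.0.3). Choose a Dashboard release whose compatibility notes list your Kubernetes version.

```shell
$ wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.3/aio/deploy/recommended.yaml
```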

By default, the Dashboard is accessible only from inside the cluster. To expose it externally, change its Service to type NodePort:

```shell
$ vim recommended.yaml
...
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30001
  selector:
    k8s-app: kubernetes-dashboard
  type: NodePort
...

$ kubectl apply -f recommended.yaml
$ kubectl get pods -n kubernetes-dashboard
NAME                                         READY   STATUS    RESTARTS   AGE
dashboard-metrics-scraper-6b4884c9d5-gl8nr   1/1     Running   0          13m
kubernetes-dashboard-7f99b75bf4-89cds        1/1     Running   0          13m
```

Access URL: https://NodeIP:30001

Create a service account and bind it to the default cluster-admin ClusterRole:

```shell
# Create the service account
$ kubectl create serviceaccount dashboard-admin -n kube-system
# Grant it the cluster-admin role
$ kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin
# Print its token
$ kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')
```

Log in to the Dashboard with the token from the output.
